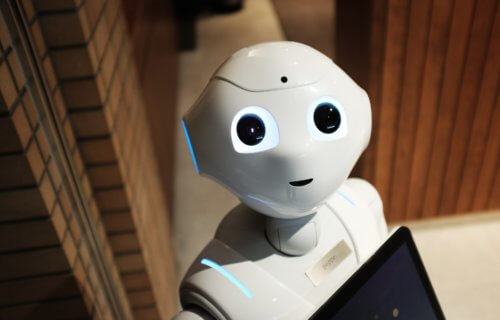

SOUTHAMPTON, United Kingdom — As robots and artificial intelligence become a common sight in society, could they secretly be pushing us into making more questionable decisions? Researchers in the United Kingdom say, during a gambling simulation, robots can actually influence human behavior and push people to take greater risks.

“We know that peer pressure can lead to higher risk-taking behavior. With the ever-increasing scale of interaction between humans and technology, both online and physically, it is crucial that we understand more about whether machines can have a similar impact,” says Dr. Yaniv Hanoch from the University of Southampton in a university release.

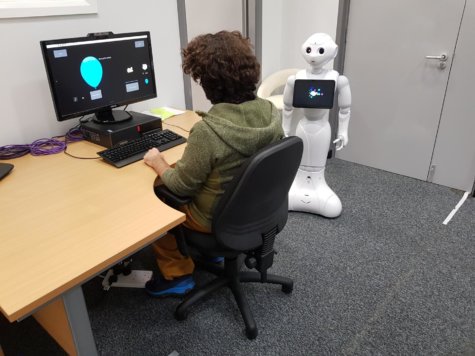

Researchers studied 180 undergraduates who took part in the Balloon Analogue Risk Task (BART). The computer-based challenge asks participants to press the spacebar on a keyboard to inflate a virtual balloon. With each press of the spacebar, the balloon inflates slightly and one penny is added to the player’s “temporary money bank.”

Unfortunately for the participants, the balloon can pop at random, and the more air a player pumps in, the greater the chance it will explode and cost the player their winnings. Each person can also play it safe and “cash in” before the balloon pops.
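To make the trade-off concrete, here is a minimal simulation sketch of the task in Python. The article does not say how the pop point is chosen, so the sketch assumes it is drawn uniformly between 1 and a hypothetical `max_pumps` of 128; the function names and the fixed "target number of pumps" strategy are also illustrative assumptions, not the researchers' software.

```python
import random

PENNY_PER_PUMP = 1  # from the article: each spacebar press adds one penny


def play_balloon(target_pumps, max_pumps=128, rng=random):
    """Play one balloon and return the pennies banked (0 if it pops).

    Assumption (not from the study): the hidden pop point is uniform on
    1..max_pumps, so the chance of popping on the next pump rises as the
    balloon gets bigger.
    """
    pop_point = rng.randint(1, max_pumps)  # hidden from the player
    if target_pumps >= pop_point:
        return 0  # balloon popped: the temporary money bank is lost
    return target_pumps * PENNY_PER_PUMP  # player cashed in before the pop


def average_earnings(target_pumps, n_balloons=10_000, max_pumps=128, seed=0):
    """Average pennies per balloon for a player who always stops at target_pumps."""
    rng = random.Random(seed)  # seeded so repeated runs give the same numbers
    total = sum(play_balloon(target_pumps, max_pumps, rng) for _ in range(n_balloons))
    return total / n_balloons


if __name__ == "__main__":
    for target in (5, 32, 64, 96, 120):
        print(target, round(average_earnings(target), 1))
```

Under this assumed model, a very cautious player banks little and a very reckless one loses most balloons, so average earnings are highest somewhere in between. That is one way a player who pumps more than a cautious baseline could end up with more money.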

Robotic peer pressure

During the experiment, researchers split the students into three groups. One group served as the control, with no robot in the room. One third of the students took the test with a robot present that only gave them instructions on how to play the game and then remained silent. The final third of the volunteers played the game with a robot next to them that kept making encouraging comments like “why did you stop pumping?”

The results reveal that the students who kept getting egged on to inflate the balloon took more risks. They blew up their balloons much more frequently than the other two groups and also earned more money for it. The silent-robot group and the no-robot control group performed about the same, showing little difference in their behavior.

“We saw participants in the control condition scale back their risk-taking behavior following a balloon explosion, whereas those in the experimental condition continued to take as much risk as before. So, receiving direct encouragement from a risk-promoting robot seemed to override participants’ direct experiences and instincts,” Dr. Hanoch reports.

‘Urgent attention from research community’ needed

Study authors say these findings point to a need to examine the growing interactions between man and machine, and to see whether digital assistants or on-screen avatars encourage behavioral changes in fields other than gambling.

“With the wide spread of AI technology and its interactions with humans, this is an area that needs urgent attention from the research community,” Dr. Hanoch concludes.

“On the one hand, our results might raise alarms about the prospect of robots causing harm by increasing risky behavior. On the other hand, our data points to the possibility of using robots, and AI, in preventive programs such as anti-smoking campaigns in schools, and with hard to reach populations, such as addicts.”

The study appears in the journal Cyberpsychology, Behavior, and Social Networking.